Three-step expert framework for scaling AI agents in R&D

Most engineering organizations are running AI pilots. Very few are turning them into something that actually moves the needle. Here is what the ones getting it right are doing differently.

“An agentic AI system is a network of specialized AI agents that executes, evaluates, and iterates on engineering tasks autonomously without requiring human input at each step. Unlike a single AI tool, an agentic system coordinates across domains, passes work between agents, and manages entire workflows end to end.”

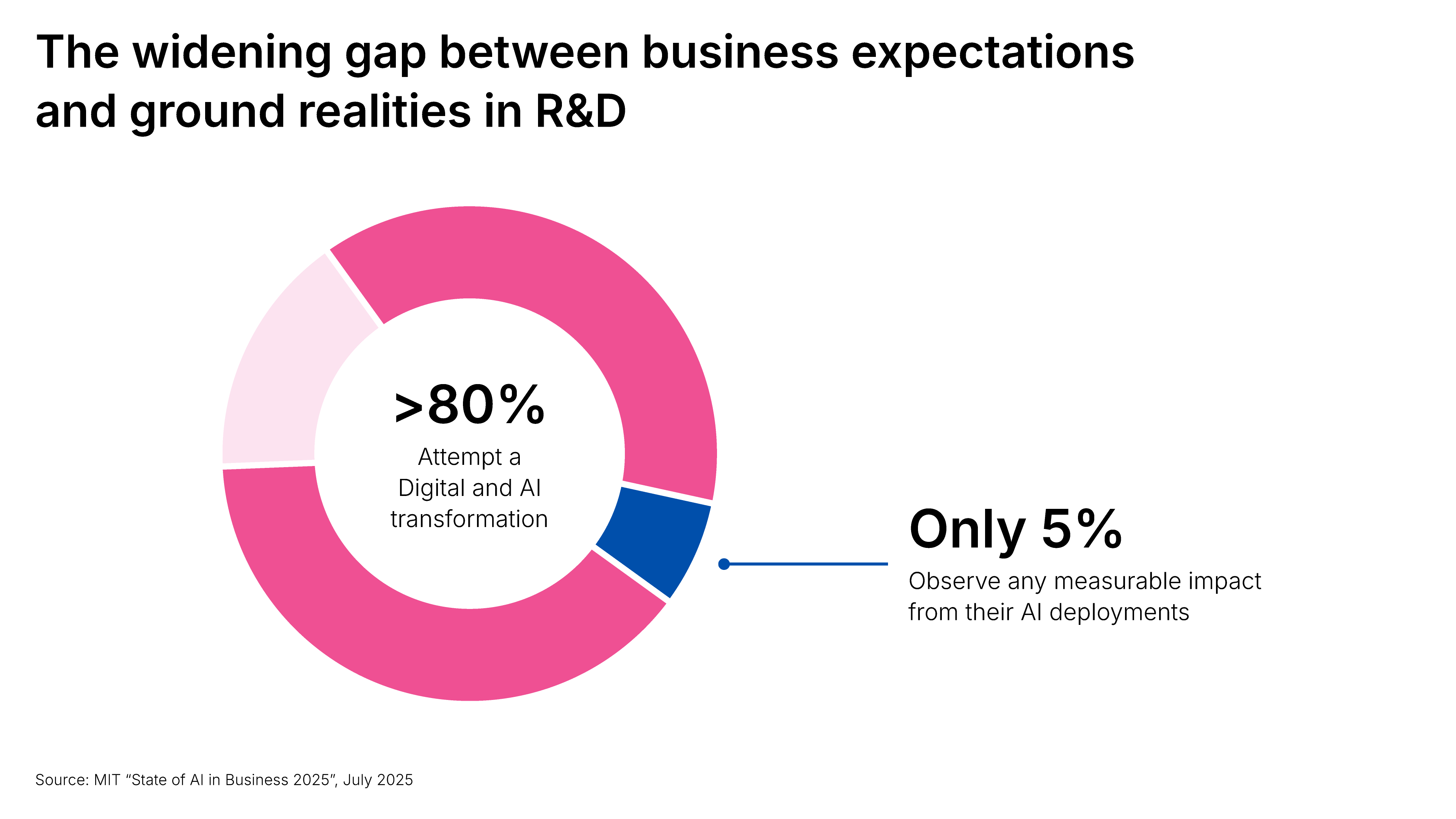

The widening gap between business expectations and ground realities in R&D

80% of organizations running AI in R&D are failing to capture real value from it (Source: AI pilot to AI workforce webinar from McKinsey and Synera experts). The cause has less to do with the technology itself and more to do with how organizations approach it. They fail to identify the use cases that matter, neglect change management, and plan pilots to showcase rather than to scale.

The R&D function was already under extraordinary pressure before AI entered the picture:

- Chinese OEMs are bringing new vehicles to market in 18 to 24 months, while Western counterparts still average four to five years. Estimates of the gap vary: Manufacturing Digital puts Western development at nearly twice as long, and AlixPartners at two to three times as long

- Product complexity is accelerating across software and hardware at the same time

- Cost pressure is mounting while engineering headcount and budgets stay flat

- Geopolitical volatility and supply chain fragmentation are adding risk at every development stage

AI promised a way through all of this, and it can deliver, but not through pilots alone. Most organizations have accumulated proofs of concept, dashboards, and isolated use cases that have not added up to a structural shift in how engineering work gets done.

That is the gap between AI as an experiment and AI as a competitive advantage. It is the gap Synera and McKinsey address directly in this webinar, with a clear framework for what it actually takes to scale.

Marc Uth is a Senior Account Executive and Strategic Partnerships lead at Synera. He has been instrumental in bringing purpose-built agentic AI systems to notable manufacturing organizations through Synera's Agentic AI platform for engineering, helping them solve productivity challenges. The platform connects with over 80 engineering tools, from CAD and FEA to PLM and costing systems, and automates the workflows that run between them.

Dr. Fabian Lensing is an Associate Partner at McKinsey & Company, specializing in R&D transformation across automotive suppliers, commercial vehicle OEMs, and advanced industry. He has spent nearly a decade helping engineering organizations close the gap between AI ambition and AI impact.

Together, they outlined a clear path from isolated pilots to a functioning AI workforce. Here is what they covered.

Why most AI pilots never scale

Before we explore solutions, it helps to understand why so many AI pilots stall. Synera and McKinsey point to three consistent failure patterns:

- Wrong use cases: Teams choose what is technically exciting rather than what sits on the critical path. A tool that speeds up one task by 80% sounds great, until you realize that task is only 5% of the workflow.

- Point solutions, not connected workflows: Individual agents solving individual problems do not compound. Value comes from agents that connect across tools and hand work over to each other without human intervention at every step.

- The human side gets ignored: Pilots drive excitement. But without a strategic rollout, training, and dedicated experts to validate the AI results, adoption drops and the pilot dies quietly.
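The 5% example in the first point is Amdahl's law in miniature. Here is a worked version, assuming "speeds up by 80%" means the task takes 80% less time. Let T be the end-to-end workflow duration, p = 0.05 the accelerated task's share of it, and s = 0.8 the fraction of that task's time saved:

```latex
\begin{aligned}
T_{\text{new}} &= (1 - p)\,T + p\,(1 - s)\,T \\
               &= 0.95\,T + 0.05 \times 0.2\,T \\
               &= 0.96\,T
\end{aligned}
```

The workflow gets only 4% shorter. Even eliminating the task entirely (s = 1) caps the gain at p = 5%. The leverage sits in the other 95% of the workflow.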

Uncovering the right use cases rarely happens bottom-up. Without management guidance, teams default to what is familiar or technically interesting instead of what moves the needle. A structured value assessment helps engineering and R&D leaders identify where AI will have the most transformational impact.

What agentic AI actually means for engineering

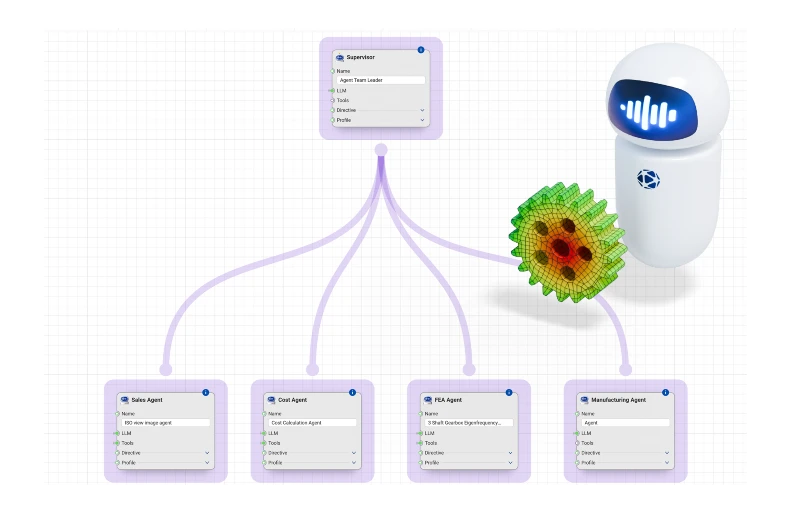

The term gets used loosely, so a quick definition is worth it. Here is what differentiates an AI tool from an Agentic AI system:

A tool executes a task when a human tells it to. An agent executes a task, evaluates the result, decides what to do next, and iterates, without waiting for a human at each step. The difference is not subtle. Scale that up across a network of specialized agents, each owning a domain, sharing context, and handing work to the next without pause, and you have an Agentic AI system that runs entire engineering workflows end to end.

It is the gap between AI that speeds up one step and AI that removes the coordination overhead between all of them.
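The tool-versus-agent distinction can be sketched in a few lines. This is a minimal illustration under loudly stated assumptions. The "tool" is a hypothetical toy beam-stress formula standing in for a real simulation, and the thresholds are invented. The point is the loop: execute, evaluate, decide, iterate, and hand over to a human only when the agent runs out of options.

```python
"""Sketch: a tool runs once when asked; an agent loops execute -> evaluate -> decide.

Everything here is hypothetical: a toy beam-stress formula stands in for a
real simulation tool, and the thresholds are illustrative.
"""


def bending_stress_mpa(thickness_mm: float) -> float:
    """Toy 'tool': peak bending stress of a cantilever, sigma = 6FL / (b t^2).

    Assumed: 500 N tip load, 200 mm length, 20 mm width.
    """
    force_n, length_mm, width_mm = 500.0, 200.0, 20.0
    return 6 * force_n * length_mm / (width_mm * thickness_mm ** 2)


def run_agent(start_mm: float, limit_mpa: float,
              step_mm: float = 1.0, max_iterations: int = 10) -> dict:
    """Agent: keeps calling the tool and adjusting the design until the
    result passes, or hands over to a human when its budget runs out."""
    thickness = start_mm
    history = []
    for _ in range(max_iterations):
        stress = bending_stress_mpa(thickness)        # execute
        history.append((thickness, round(stress, 1)))
        if stress <= limit_mpa:                        # evaluate
            return {"status": "done", "thickness_mm": thickness, "history": history}
        thickness += step_mm                           # decide: thicken, then iterate
    last_tried = history[-1][0] if history else start_mm
    return {"status": "escalate_to_human", "thickness_mm": last_tried, "history": history}


if __name__ == "__main__":
    # A plain tool call: one answer, and a human decides what happens next.
    print(bending_stress_mpa(10.0))
    # The agent iterates on its own: it finishes at 14.0 mm after 5 evaluations.
    print(run_agent(start_mm=10.0, limit_mpa=160.0))
```

The escalation branch reflects the "only escalate when needed" idea. The agent works within a fixed iteration budget rather than looping forever.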

In engineering R&D divisions, the delays compound silently: waiting on a simulation result, waiting for team alignment, and reworking designs after a late finding.

An Agentic AI system eliminates that latency at every junction. Tools that previously could not communicate pass work to each other directly. Specialists who previously waited on each other are unblocked. The critical path shortens because the gaps between the work have been removed.

A well-designed Agentic AI system in engineering combines three things:

- A reasoning core: A large language model that reads requirements, evaluates outputs, and decides what to do next

- Company-specific knowledge: Design guidelines, product history, and requirements databases with the institutional knowledge that currently lives in people's heads

- Tool integrations with instructions: Connections to CAD, simulation, PLM, and costing tools, paired with explicit logic for how to use them in sequence
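One way to picture how the three ingredients fit together is as a small data structure. This is a hypothetical sketch. A rule-based function stands in for the large language model, and the "CAD", "FEA", and "costing" entries are toy functions, not real tool APIs. The structure still shows the separation the list describes: a reasoning core, a knowledge base it consults, and tools paired with an explicit order of use.

```python
"""Sketch of the three ingredients of an engineering agent.

Hypothetical throughout: the 'reasoning core' is a rule-based stand-in for a
large language model, and the tools are toy functions, not real CAD/FEA/cost APIs.
"""
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Context = Dict[str, float]


@dataclass
class EngineeringAgent:
    # 1. Reasoning core: reads the current context and decides what to do next.
    reason: Callable[[Context, Dict[str, float]], str]
    # 2. Company-specific knowledge, e.g. design guidelines.
    knowledge: Dict[str, float]
    # 3. Tool integrations ...
    tools: Dict[str, Callable[[Context], Context]]
    # ... paired with explicit instructions: the order in which to use them.
    instructions: List[str] = field(default_factory=list)

    def run(self, context: Context) -> dict:
        for step in self.instructions:
            context = self.tools[step](context)
            verdict = self.reason(context, self.knowledge)
            if verdict != "continue":
                return {"stopped_at": step, "verdict": verdict, **context}
        return {"stopped_at": None, "verdict": "complete", **context}


def rule_based_reasoner(context: Context, knowledge: Dict[str, float]) -> str:
    """Stand-in for an LLM: checks results against the design guidelines."""
    if context.get("safety_factor", float("inf")) < knowledge["min_safety_factor"]:
        return "redesign: safety factor below guideline"
    if context.get("unit_cost_eur", 0.0) > knowledge["max_unit_cost_eur"]:
        return "escalate: unit cost above target"
    return "continue"


toy_tools = {
    # Toy CAD: plate volume in cm^3 from dimensions in mm.
    "cad": lambda c: {**c, "volume_cm3": c["length_mm"] * c["width_mm"] * c["thickness_mm"] / 1000},
    # Toy FEA: safety factor grows linearly with thickness (illustrative only).
    "fea": lambda c: {**c, "safety_factor": c["thickness_mm"] / 5},
    # Toy costing: a flat price per cm^3 of material.
    "costing": lambda c: {**c, "unit_cost_eur": c["volume_cm3"] * 0.02},
}

agent = EngineeringAgent(
    reason=rule_based_reasoner,
    knowledge={"min_safety_factor": 2.0, "max_unit_cost_eur": 2.0},
    tools=toy_tools,
    instructions=["cad", "fea", "costing"],
)

if __name__ == "__main__":
    print(agent.run({"length_mm": 200, "width_mm": 20, "thickness_mm": 14}))
    print(agent.run({"length_mm": 200, "width_mm": 20, "thickness_mm": 8}))
```

Keeping the guidelines in `knowledge` rather than hard-coding them in the reasoner mirrors the point about institutional knowledge. The same reasoning logic can be reused when the guidelines change.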

Agentic AI systems built from a team of specialized agents go further. Consider an agentic system that manages a workflow from design ideation through to costing.

In this case, a supervisor agent coordinates specialists: one for requirements, one for geometry, one for simulation, one for cost. They work in parallel, negotiate, and only escalate to a human when the situation genuinely needs one. A workflow that previously took four handovers and two weeks runs autonomously.
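The supervisor pattern can be sketched as follows, under heavy simplifying assumptions. Each "specialist" is a hypothetical plain function with a toy model, and "negotiation" is reduced to one rule. If simulation fails, the supervisor asks geometry for a thicker part. If cost fails, no amount of thickening helps, so the conflict goes to a human. Simulation and cost run in parallel on each proposal using Python's standard `concurrent.futures` thread pool.

```python
"""Sketch of a supervisor coordinating specialist agents.

Hypothetical throughout: each 'agent' is a plain function with a toy model,
standing in for agents that would wrap real requirements, CAD, simulation,
and costing tools.
"""
from concurrent.futures import ThreadPoolExecutor


def requirements_agent(brief: dict) -> dict:
    # Turns a brief into checkable targets.
    return {
        "load_n": brief["load_n"],
        "max_stress_mpa": brief["max_stress_mpa"],
        "max_cost_cents": brief["max_cost_cents"],
    }


def geometry_agent(requirements: dict, thickness_mm: int) -> dict:
    # Proposes a toy cantilever: fixed length and width, variable thickness.
    return {"length_mm": 200, "width_mm": 20,
            "thickness_mm": thickness_mm, "load_n": requirements["load_n"]}


def simulation_agent(geometry: dict) -> float:
    # Toy stand-in for FEA: peak bending stress, sigma = 6FL / (b t^2).
    g = geometry
    return 6 * g["load_n"] * g["length_mm"] / (g["width_mm"] * g["thickness_mm"] ** 2)


def cost_agent(geometry: dict) -> int:
    # Toy costing model: 10 cents per mm of thickness.
    return 10 * geometry["thickness_mm"]


def supervisor(brief: dict, start_mm: int = 10, max_mm: int = 30) -> dict:
    req = requirements_agent(brief)
    log = []
    with ThreadPoolExecutor(max_workers=2) as pool:
        for t in range(start_mm, max_mm + 1):
            geom = geometry_agent(req, t)
            # Simulation and cost run in parallel on the same proposal.
            stress_job = pool.submit(simulation_agent, geom)
            cost_job = pool.submit(cost_agent, geom)
            stress, cost = stress_job.result(), cost_job.result()
            log.append((t, round(stress, 1), cost))
            if cost > req["max_cost_cents"]:
                # Thickening only adds cost, so the conflict needs a human decision.
                return {"status": "escalate_to_human", "reason": "cost target cannot be met", "log": log}
            if stress <= req["max_stress_mpa"]:
                return {"status": "done", "thickness_mm": t, "log": log}
            # Otherwise the loop asks geometry for a thicker design.
    return {"status": "escalate_to_human", "reason": "no feasible design in range", "log": log}


if __name__ == "__main__":
    print(supervisor({"load_n": 500, "max_stress_mpa": 160, "max_cost_cents": 150}))
    print(supervisor({"load_n": 500, "max_stress_mpa": 160, "max_cost_cents": 120}))
```

With a 150-cent budget, the first brief converges at 14 mm without human input. With 120 cents, the second brief hits a genuine conflict between stress and cost and escalates after four proposals. That is the kind of situation that "genuinely needs" a human.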

This is just one such example. What this looks like on a real engineering problem, with real tools running in real time, is demonstrated live in the webinar recording.

The McKinsey three-phase framework for scaling AI agents in R&D

One of the most practical things to come out of the session is a three-phase framework that McKinsey uses with engineering organizations to move from pilot to production. While the phases seem straightforward, the execution behind each one is where most organizations need support.

Phase 1 - Assess your workflows

Before building anything, define what your R&D function should look like in five years. Map your current workflows. Identify which use cases have enough value, feasibility, and organizational excitement to be worth pursuing first. All three criteria matter. Miss one and you will fail to get funded, fail to ship, or fail to get anyone to use it.
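The "all three criteria matter" rule amounts to a gate, not a weighted score. Below is a minimal sketch, assuming hypothetical 1 to 5 scores and an invented candidate list. It is not McKinsey's actual selection tool, which is covered in the webinar.

```python
"""Sketch: a use case must clear all three bars (value, feasibility,
organizational excitement) before it is pursued.

Hypothetical: the 1-5 scores, threshold, and candidate list are illustrative,
not McKinsey's actual selection tool.
"""
from typing import List, NamedTuple


class UseCase(NamedTuple):
    name: str
    value: int        # business value, 1-5
    feasibility: int  # technical and data readiness, 1-5
    excitement: int   # organizational pull, 1-5


def shortlist(candidates: List[UseCase], bar: int = 3) -> List[UseCase]:
    # A gate, not a weighted sum: a high score on one criterion
    # cannot compensate for missing another.
    passing = [u for u in candidates if min(u.value, u.feasibility, u.excitement) >= bar]
    return sorted(passing, key=lambda u: u.value, reverse=True)


candidates = [
    UseCase("RFQ-to-quote automation", value=5, feasibility=4, excitement=4),
    UseCase("Documentation chatbot", value=2, feasibility=5, excitement=5),
    UseCase("Fully autonomous vehicle design", value=5, feasibility=1, excitement=4),
    UseCase("Simulation setup automation", value=4, feasibility=4, excitement=3),
]

if __name__ == "__main__":
    for u in shortlist(candidates):
        print(u.name)
```

Only the first and last candidates survive. The chatbot would struggle to get funded, and the fully autonomous design project would not ship. Under a simple sum of scores, both would have looked competitive.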

Phase 2 - Build a proof of value, not a minimum viable product (MVP)

This framing matters more than it sounds. An MVP optimizes for how quickly you learn the technology and the workflow. A proof of value optimizes for the business case that unlocks the next round of investment. That difference changes what you build, how you measure it, and how you sell it internally.

Phase 3 - Scale like a transformation program

Because that is what it is. Treated as a plug-and-play add-on, AI in the engineering process fails, because the workflow itself has to be transformed before AI is brought in.

Building an AI workforce requires value tracking, leadership accountability, change management, and a model for finding early believers and using them to bring the rest of the organization along.

The full detail behind each phase, including the tools McKinsey uses to run the use-case selection process and the governance model for scaling, is covered in the webinar recording.

Want to see the full framework in action? Watch the webinar on AI pilots to AI workforce and get the complete playbook.

How to scale AI pilots in engineering R&D: 6 tips from McKinsey and Synera experts

Most AI programs stall not because the technology fails, but because the organization around it was not built to scale. These six practices are what set the organizations that scale apart from the rest.

Build a roadmap before you build anything else

Start with one clear question: what does your R&D function look like in five years? Then map your current workflows against what is possible today and identify the gaps. Without this, you end up with pilots that each make sense individually but add up to nothing. The roadmap is not a formality. It is how you decide where to put your weight.

Why it matters: Without a roadmap, AI investments stay scattered, and scattered investments never compound into competitive advantage.

Use platforms that connect to what you already have

Integration debt is one of the biggest hidden costs in engineering AI. Agents that work in isolation but cannot talk to your existing tools deliver far less than their potential. Synera connects with over 80 engineering tools, including CAD environments, FEA solvers, PLM systems, and costing databases. That breadth matters because value in R&D does not come from automating one tool. It comes from removing the handovers between all of them.

Why it matters: An AI system is only as powerful as the tools it can reach, and in engineering, the value is in the connections between tools, not the tools themselves.

Find your AI ambassadors early and invest in them

In every organization, there are engineers who are already experimenting, already curious, already halfway there. Find them. Give them time and resources to go deep on the first use cases. Then make them visible. They will do more for adoption than any top-down communication campaign ever will.

Why it matters: Adoption does not spread from the top down in engineering organizations; it spreads peer to peer, and that requires the right people carrying the message.

Rethink your R&D operating model around Agentic AI

Most R&D organizations are still structured around one assumption: every workflow needs a human at every step. Agentic AI breaks that assumption. In domain-heavy functions like structural analysis or thermal management, expert-led AI teams let specialists focus entirely on judgment and validation while agents handle simulation setup, iteration, and results processing.

At the program level, an AI project control tower tracks dependencies, flags risks, and coordinates across multiple programs simultaneously, giving program managers decision-ready information instead of data to chase down. Based on your organization’s needs, you can also consider a system where a virtual CAE and simulation shared service runs FEA, CFD, and multibody dynamics workflows on demand.
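To make "tracks dependencies, flags risks" more concrete, here is a minimal sketch of the kind of check a control tower might run. It assumes tasks, durations, and deadlines are available as plain data. All names and numbers are hypothetical. It uses the standard-library `graphlib.TopologicalSorter` (Python 3.9+) to propagate a delay through the dependency graph.

```python
"""Sketch of a control-tower check: propagate a delay through task
dependencies and flag milestones, across programs, that now miss their dates.

Hypothetical: task names, durations (days), and deadlines are illustrative.
Requires Python 3.9+ for graphlib.
"""
from graphlib import TopologicalSorter


def earliest_finish(durations: dict, depends_on: dict, delays: dict) -> dict:
    # Every task becomes a node; its dependencies are its predecessors.
    graph = {task: depends_on.get(task, ()) for task in durations}
    finish = {}
    for task in TopologicalSorter(graph).static_order():
        start = max((finish[dep] for dep in graph[task]), default=0)
        finish[task] = start + durations[task] + delays.get(task, 0)
    return finish


def flag_risks(durations: dict, depends_on: dict, deadlines: dict, delays: dict) -> list:
    finish = earliest_finish(durations, depends_on, delays)
    return sorted(task for task, due in deadlines.items() if finish[task] > due)


# Two programs share one component (a bracket), so a slip propagates to both.
durations = {"bracket_cad": 5, "bracket_fea": 3, "program_a_release": 2, "program_b_release": 4}
depends_on = {
    "bracket_fea": ["bracket_cad"],
    "program_a_release": ["bracket_fea"],
    "program_b_release": ["bracket_fea"],
}
deadlines = {"program_a_release": 12, "program_b_release": 14}

if __name__ == "__main__":
    print(flag_risks(durations, depends_on, deadlines, delays={}))
    print(flag_risks(durations, depends_on, deadlines, delays={"bracket_cad": 3}))
```

On plan, nothing is flagged. A three-day slip on the shared bracket pushes both program releases past their milestones. When each program tracks only its own plan, this kind of cross-program dependency is easy to miss.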

Why it matters: With agentic AI systems, application engineering has the potential to become 80 to 90% autonomous, with agents working from a given brief while engineers focus on creative problem solving.

Treat change management as a core deliverable

AI adoption follows a predictable pattern: it climbs at launch, then drops. Not because of resistance to AI, but because there is no structure to sustain new behavior once the excitement fades.

What prevents the drop:

- Leaders who role-model AI use, not just endorse it in presentations

- Honest answers to the career questions engineers are already asking

- A clear organizational narrative about where this is heading and what it means for their roles

Why it matters: The most sophisticated agentic AI system delivers zero value if engineers do not use it, and they will not use it without the right structure around them.

Build AI into your performance system

Training programs are necessary but not sufficient. If AI adoption does not show up in how leaders are measured, it will always lose to whatever does. The organizations scaling fastest have AI usage and ROI targets in senior leader agreements, not as a side initiative but as part of how the business runs. That signal travels fast. Its absence travels faster.

Why it matters: What gets measured gets done, and if AI adoption is not in the performance system, it will always be treated as optional.

What else is inside the AI pilots to AI workforce webinar?

These six principles give you the direction. The webinar gives you the depth.

In the full session, Marc and Fabian go further and cover:

- The complete McKinsey three-phase framework, including the use-case selection tools and governance model

- A live Synera platform demo showing an agentic AI system autonomously handling a real engineering workflow, from requirement to validated output

- Real client data from a tier-1 automotive supplier on what happened to their RFQ-to-quote timeline and win rates after deploying agentic AI with a team of agents

Early movers on agentic AI are not just catching up. They are pulling ahead and setting the pace. If you are deciding where to place your bets in R&D over the next twelve months, this is the session to watch.