TL;DR

Gartner's 2025 assessment of 20 AI use cases for engineering gives leaders a defensible framework for prioritizing investment. Likely Wins, such as predictive costing, requirements management, and PMI generation, offer proven ROI and manageable integration. Calculated Risks, including simulation governance and lifecycle assessment, offer competitive differentiation for organizations willing to invest in stronger data foundations first. But Gartner evaluates each use case in isolation. The organizations actually reaching production are doing something different: they are connecting these capabilities into orchestrated workflows that span CAD, simulation, and costing tools, without manual handoffs between them. That orchestration layer is what separates a collection of pilots from a transformation. At Synera, it is the core of what we build.

Choosing the Right AI Use Cases for Engineering

Choosing the right AI use cases for engineering is now the defining question in most technology roadmap conversations, and the consequences of getting it wrong are expensive. Pilots that never reach production. Point solutions that still require manual handoffs. Investments that impress in demos and stall in deployment. According to a 2024 O'Reilly report, only 26% of AI initiatives advance beyond the pilot phase, with 74% stalling due to operational or organizational barriers. IDC research puts it even more starkly: for every 33 AI proofs of concept launched, only four graduate to production.

In July 2025, Gartner published its first structured evaluation of AI applications for design and engineering in manufacturing, assessing twenty use cases across two dimensions: business value and implementation feasibility. The framework is useful. But it is also incomplete, and the gap it leaves open explains why some engineering organizations are reaching production while others remain stuck at proof of concept.

I lead AI at Synera, an AI agent platform purpose-built for engineering teams at companies like Hyundai, BMW Group, Airbus, and Knorr-Bremse. Gartner's assessment aligns closely with what we observe succeeding in practice. This article walks through the research findings, adds practitioner context, and identifies the variable that Gartner's framework does not address: process orchestration. It is the difference between deploying AI and transforming how engineering work gets done.

How to Evaluate AI Use Cases for Engineering

Gartner's report on AI Use-Case Assessment for Design and Engineering in Manufacturing, published in July 2025, evaluates twenty AI applications across two dimensions:

- Business value: the degree to which a use case delivers measurable impact on productivity, cost, quality, or speed

- Implementation feasibility: how mature the technology is, how complex the integration requirements are, and how significant the adoption barriers tend to be in practice.

From those two dimensions, Gartner organizes the twenty use cases into three categories:

- Likely Wins: These combine high business value with high implementation feasibility. Technology is mature, ROI is demonstrable, and adoption barriers are manageable. These are where most organizations should begin.

- Calculated Risks: These combine high business value with variable or lower feasibility. The potential is significant, but they require stronger organizational commitment, longer implementation timelines, or more developed data foundations before they deliver.

- Marginal Gains: These have lower business value relative to implementation effort. These are excluded from this analysis: for most engineering organizations navigating resource constraints, the investment-to-return ratio does not justify prioritizing them at this stage.
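The two-dimensional sorting described above can be sketched as a small rule. This is only an illustration: the 0 to 1 scores, the `threshold` value, and the `UseCase` and `categorize` names are assumptions made for this example, not figures or methods from the Gartner report, and the rule simplifies "value relative to effort" into a single value cutoff.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    business_value: float  # hypothetical 0-1 score, not a Gartner figure
    feasibility: float     # hypothetical 0-1 score, not a Gartner figure


def categorize(use_case: UseCase, threshold: float = 0.6) -> str:
    """Sort a use case into one of the three categories.

    The single threshold is an assumption made for illustration.
    """
    if use_case.business_value < threshold:
        return "Marginal Gain"      # lower value relative to effort
    if use_case.feasibility >= threshold:
        return "Likely Win"         # high value, high feasibility
    return "Calculated Risk"        # high value, lower feasibility


# Illustrative scores only
examples = [
    UseCase("Predictive costing", 0.8, 0.8),
    UseCase("Design for life cycle assessment", 0.8, 0.4),
]
for uc in examples:
    print(f"{uc.name}: {categorize(uc)}")
```

In practice the scores would come from an organization's own assessment of value and feasibility, which is where most of the judgment lies.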

Likely Wins vs. Calculated Risks: At a Glance

Gartner's Likely Wins: The AI Use Cases Ready to Deploy Now

Gartner identifies eight use cases where the combination of technology maturity, demonstrable ROI, and manageable adoption barriers makes them the strongest starting points. Based on working with engineering organizations over the past twelve months, I believe three practical criteria determine whether a Likely Win actually reaches production:

- The balance between model capability and the tool integration surrounding it: AI is only as useful as its connection to the engineering systems where work actually happens.

- The integration layers required for enterprise-scale rollout, including authentication, data governance, and compatibility with existing PLM and CAx environments.

- How close the selected task sits to a business-critical process: the nearer to revenue, speed to quote, or product quality, the stronger the case for investment and the clearer the measurement.

Against those criteria, three Likely Wins stand out as the strongest entry points for most engineering organizations:

Predictive Costing

Predictive Costing allows engineers to estimate manufacturing costs directly from CAD data and receive recommendations for cost reduction before resources are committed. Gartner notes this capability has existed since the 1980s, but generative AI has significantly accelerated its effectiveness.

Organizations using similarity-based approaches, where AI searches historical project data to find comparable components, report meaningful reductions in estimation time. The combination of structured historical data, repeatable geometry inputs, and direct connection to commercial decisions makes this one of the most feasible Likely Wins to operationalize.
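A similarity-based estimate can be sketched as a nearest-neighbor lookup over historical parts. The sketch below is a minimal illustration under stated assumptions: the feature choice (mass, volume, hole count), the sample data, and the `estimate_cost` function are all hypothetical, and a real system would normalize features and draw on far richer geometry descriptors.

```python
import math

# Hypothetical historical parts: (mass_kg, volume_l, hole_count) -> cost in EUR.
# These values are invented for illustration.
HISTORY = [
    ((1.0, 0.50, 4.0), 40.0),
    ((1.1, 0.55, 4.0), 44.0),
    ((5.0, 2.00, 12.0), 150.0),
]


def estimate_cost(features, history, k=2):
    """Average the known cost of the k most similar historical parts.

    Similarity is plain Euclidean distance on raw features, which is an
    assumption; features on different scales would need normalizing.
    """
    if not history:
        raise ValueError("history must contain at least one part")
    ranked = sorted(history, key=lambda item: math.dist(features, item[0]))
    nearest = ranked[:k]
    return sum(cost for _, cost in nearest) / len(nearest)


new_part = (1.05, 0.50, 4.0)
print(f"Estimated cost: {estimate_cost(new_part, HISTORY):.2f} EUR")
```

The design choice worth noting is that the estimate is traceable: an engineer can inspect which historical parts drove the number, which makes review part of the normal workflow.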

Requirements Management

Requirements Management addresses a persistent problem in engineering organizations: design requirements scattered across documents, databases, and individual expertise. AI can automate the capture and contextual organization of requirements, reducing the rework caused by teams operating from inconsistent or outdated information.

Gartner notes this use case is already in production at leading manufacturers, though it requires training to reflect organization-specific requirements structures. Where it works, it eliminates one of the most common sources of late-stage design changes.

PMI Generation and Diagnosis

What is PMI? Product Manufacturing Information (PMI) refers to the annotations embedded directly in 3D CAD models that communicate manufacturing and inspection requirements, including geometric dimensioning and tolerancing (GD&T), surface finish specifications, material callouts, and assembly notes. PMI replaces or supplements traditional 2D engineering drawings by attaching this data directly to the model geometry, making it machine-readable and usable throughout downstream manufacturing and quality processes.

PMI Generation and Diagnosis automates the creation of product manufacturing information, such as geometric dimensioning and tolerancing, and flags errors before they propagate downstream. Beyond efficiency gains, this use case addresses a growing concern: as experienced engineers retire, the tacit knowledge embedded in their PMI decisions leaves with them.

AI-assisted generation preserves that institutional knowledge in a scalable, auditable form.

Other Likely Wins include: Design concept generation, content extraction from engineering drawings, drawing verification, manufacturing process planning, and parts search for design. Each combines proven technology with clear efficiency benefits and identifiable integration paths.

Calculated Risks: High-Value AI Investments That Require Stronger Foundations

Gartner's Calculated Risks offer significant potential but demand more up front: stronger data foundations (clean historical data, supplier data, and ML training datasets), greater organizational commitment, or tolerance for longer implementation timelines. They represent where competitive differentiation emerges for organizations that are willing to invest in the foundations before they invest in the capability.

Design for Quality Assessment

Design for Quality Assessment uses AI to analyze designs and recommend improvements for reliability and durability before physical testing begins. Gartner notes this requires substantial investment in machine learning to capture the multiple dimensions of quality criteria across materials, manufacturing processes, and operating conditions.

For organizations where quality failures are expensive, such as those responsible for automotive crash performance or aerospace structural qualification, the upside is significant. The prerequisite is a sufficiently rich history of design-quality outcomes to train against.

Simulation Model Creation and Governance

Simulation Model Creation and Governance addresses the preparation bottleneck that precedes every finite element analysis: defeaturing, surface detection, and mesh generation.

These steps are time-consuming, specialist-dependent, and highly repetitive across model variants. AI can automate the preparation workflow, allowing simulation engineers to focus on analysis and interpretation rather than setup.

Gartner frames this as a feasibility challenge: the variability of geometry and the precision requirements of simulation make full automation difficult. But organizations that solve it unlock significant capacity in their most constrained technical resource.

Design for Life Cycle Assessment

Design for Life Cycle Assessment automates environmental impact analysis throughout a product's life cycle, embedding sustainability evaluation into the design phase rather than treating it as a compliance exercise at the end. Gartner identifies data availability as the primary constraint: many organizations lack sufficiently detailed material and process input data, and changes to suppliers or materials can undermine model accuracy. For organizations with mature supplier data infrastructure, this is an increasingly strategically relevant capability as regulatory requirements tighten.

Other Calculated Risks include: regulatory compliance checking, materials discovery, design failure mode and effect analysis (DFMEA), and multi-objective design optimization. Each carries meaningful implementation complexity, but each also addresses a process that currently consumes significant specialist engineering time.

IS YOUR ORGANIZATION READY FOR A CALCULATED RISK?

Three questions to test readiness before committing:

(1) Do you have sufficient historical data — structured, labeled, and accessible — to train against?

(2) Can your team tolerate an implementation timeline measured in quarters rather than weeks?

(3) Is there executive sponsorship to sustain the investment through a longer proof-of-value cycle?

If the answer to any of these is uncertain, starting with a Likely Win and building toward the Calculated Risk is a more reliable path.

The Gap Gartner's Framework Leaves Open: Process Orchestration

Gartner's framework evaluates use cases individually. In practice, the highest-value AI implementations combine multiple use cases into orchestrated workflows that span tools, data sources, and engineering roles — and the distinction matters enormously.

The typical engineering environment looks like this:

- Processes span CAD systems, simulation tools, and costing software, each from a different vendor.

- Requirements scattered across documents and databases.

- Engineers spending significant time moving between tools, translating outputs from one system into inputs for another, and manually coordinating handoffs that should be automatic.

That coordination overhead, more than the capability of any individual AI model, is the real productivity constraint.

The organizations achieving the strongest results handle this differently:

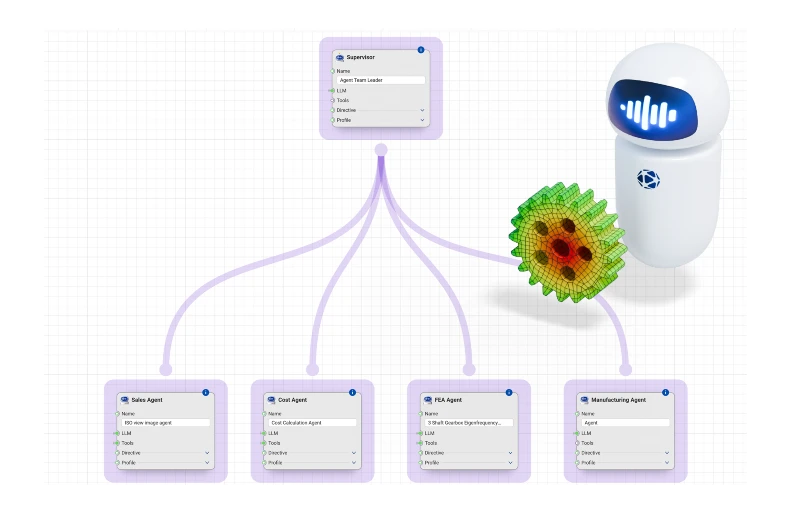

- AI is deployed across multiple use cases simultaneously, not isolated to a single function

- Agents orchestrate across tools and data sources, combining parts search, cost estimation, and simulation preparation

- Workflows are linked: the output of one agent automatically becomes the input to the next

- No engineer needs to touch the handoffs between steps

- This "orchestration layer" turns isolated AI capabilities into autonomous, end-to-end workflows

- Results in measurable productivity gains across the pipeline

- A coordinated agent team can:

  - Read a STEP (Standard for the Exchange of Product Model Data) file

  - Query historical project data

  - Run a simulation preparation sequence

  - Output a cost estimate

- All of the above happens without manual intervention at any stage
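The linked-workflow idea in the list above, where each agent's output becomes the next agent's input, can be sketched as a simple pipeline over a shared context. Everything here is hypothetical: the step functions are stubs with invented values, `run_pipeline` is a name chosen for this example, and none of it represents Synera's actual API or any vendor's interface.

```python
from typing import Any, Callable

Context = dict[str, Any]
Step = Callable[[Context], Context]


def read_step_file(ctx: Context) -> Context:
    # Stub: a real agent would parse geometry from the file at ctx["path"].
    return {**ctx, "geometry": {"volume_cm3": 120.0}}


def query_history(ctx: Context) -> Context:
    # Stub: a real agent would look up comparable parts in project data.
    return {**ctx, "cost_per_cm3": 0.35}


def prepare_simulation(ctx: Context) -> Context:
    # Stub: stands in for defeaturing, surface detection, and meshing.
    return {**ctx, "mesh_ready": True}


def output_cost_estimate(ctx: Context) -> Context:
    cost = ctx["geometry"]["volume_cm3"] * ctx["cost_per_cm3"]
    return {**ctx, "cost_estimate": round(cost, 2)}


def run_pipeline(steps: list[Step], ctx: Context) -> Context:
    """Pass the context through each step in order, with no manual handoff."""
    for step in steps:
        ctx = step(ctx)
    return ctx


result = run_pipeline(
    [read_step_file, query_history, prepare_simulation, output_cost_estimate],
    {"path": "bracket.step"},  # hypothetical file name
)
print(result["mesh_ready"], result["cost_estimate"])
```

The point of the shared context is that no step needs to know which tool produced its inputs, which is what lets steps be added, swapped, or reordered without rebuilding the handoffs.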

An AI agent platform like Synera, purpose-built to connect engineering tools into orchestrated workflows, delivers fundamentally different value from four separate AI tools that each require manual handoffs between them.

Notably, the use cases Gartner rates highest for implementation feasibility (predictive costing, requirements management, and parts search for design) are precisely the ones best suited for orchestration. They involve structured data, repeatable processes, and clear integration points with adjacent workflows. An organization that deploys these three as isolated tools captures a fraction of the value available from connecting them into a single coordinated process.

AI for Engineering Leaders: How to Prioritize AI Investments

The Gartner framework provides the structure. The practical question is how to use it as a decision tool rather than a reading list. Having observed what separates organizations that reach production from those that remain in pilot, five patterns consistently distinguish the successful ones:

- They deploy where errors are tolerable and reversible. The most reliable entry points target high-volume tasks where AI mistakes create friction rather than failure. Simulation preparation, cost estimation, and requirements organization are activities where catching errors is part of a standard engineering workflow. Organizations that attempt to deploy AI for safety-critical or irreversible decisions first face adoption barriers that have nothing to do with the technology.

- They measure against current state, not theoretical baselines. According to a 2024 McKinsey survey, fewer than one in six companies report achieving measurable business impact from AI, a gap that often traces back to ROI frameworks built on theoretical benchmarks rather than actual before-and-after comparisons. The strongest business cases quantify improvement over how work is actually done today.

- They prioritize time savings over technological sophistication. McKinsey's 2024 global survey on AI adoption found that productivity improvement and reduction of manual task hours are the dominant drivers of enterprise AI investment, consistently cited ahead of novel technology as a motivation. Engineering leaders are not seeking impressive capabilities. They are seeking capacity returned to their teams.

- They scope workflows first, not technologies. Successful organizations begin by identifying where time is lost (handoffs between tools, waiting for specialist availability, repeated setup work), and then apply AI to those specific constraints. Technology selection follows the problem definition. Not the other way around.

- They invest in orchestration, not individual capabilities. The organizations achieving production deployments recognize that engineering work spans multiple tools and data sources. They invest in the orchestration layer that connects tools and AI capabilities into coherent workflows, rather than deploying isolated point solutions that still require manual handoffs to function.
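The second pattern above, measuring against current state, comes down to a before-and-after comparison on the same task. A minimal sketch, assuming hours per task as the metric; the function name and the sample numbers are illustrative, not drawn from any deployment:

```python
def relative_improvement(baseline_hours: float, current_hours: float) -> float:
    """Fraction of time saved versus how the task is actually done today."""
    if baseline_hours <= 0:
        raise ValueError("baseline_hours must be positive")
    return (baseline_hours - current_hours) / baseline_hours


# Illustrative numbers: a task measured at 10 hours before and 6 hours after.
saving = relative_improvement(baseline_hours=10.0, current_hours=6.0)
print(f"Time saved: {saving:.0%}")
```

The baseline should be measured on real work before deployment, not taken from a vendor benchmark, so that the comparison reflects the organization's own starting point.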

How can engineering organizations start with AI use cases?

- The practical starting point: identify one Likely Win that addresses a recognized pain point in your organization, one that is close to a business-critical process and where the integration path is clear.

- Reach production within a single quarter.

- Measure results against current state.

- Use that foundation to make the case for the next investment, and begin thinking about how the use cases connect into orchestrated workflows, rather than treating each one as a standalone deployment.

From Gartner's Framework to Engineering Reality

Gartner's framework offers a structured, third-party way to evaluate AI investments in engineering, useful when options are multiplying faster than evidence. The Likely Wins provide a defensible starting point. The Calculated Risks show where differentiation is possible for organizations ready to invest.

What the framework does not cover is how use cases connect. That is where transformation happens.

At Synera, we have seen this play out directly. At Knorr-Bremse, automating the FEM model preparation workflow (defeaturing, surface detection, and meshing) eliminated human error from each preparation stage and improved the reliability of analysis inputs, while freeing simulation engineers from repetitive setup work entirely. Across Synera's deployments with global OEMs and Tier 1 suppliers, the pattern is consistent: the productivity gains from connected, orchestrated AI workflows are substantially larger than the sum of gains from the same capabilities deployed in isolation.

Synera integrates more than 80 engineering tools across CAD, simulation, costing, and PLM systems into a single orchestration layer. Engineers at companies including BMW Group, Airbus, Hyundai, Volvo Trucks, and Miele use it to run multi-step AI workflows that span the full development process, without switching tools or managing handoffs manually. The recent $40M Series B financing reflects the direction the industry is moving: toward AI that operates across the engineering lifecycle, not just within it.

The real question is not whether to invest in AI for engineering, but how: use cases that link into workflows, workflows that span tools, and tools that share context through an orchestration layer built for engineering.

The full Gartner report is available on the Synera website, alongside a practical guide for applying the framework to your own priorities. Download the report to learn more.

About the author:

Ram Seetharaman is Head of AI at Synera, leading the company's multi‑agent and agentic AI initiatives that are redefining how engineering teams design, simulate, and automate complex products. With a background in Computational Mechanics from the University of Stuttgart and five years applying ML and AI to engineering workflows, he bridges deep technical R&D and product strategy. At Synera, he owns AI strategy, roadmap, and implementation, translating domain expertise into AI‑driven workflows that accelerate simulation, design-space exploration, and automation at scale.

Before Synera, Ram contributed to award‑winning motorsport and aerospace projects as a Digital Twin and structural optimization engineer, became a World Champion in 2023, and worked at Volocopter on safety‑critical battery crash simulations.